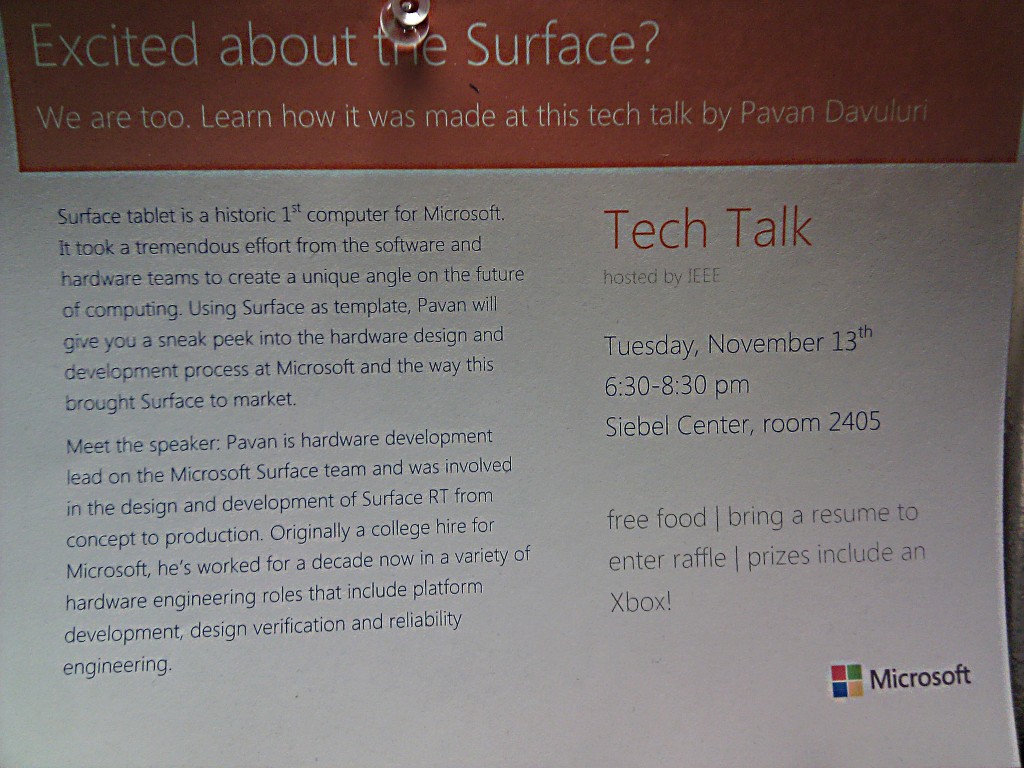

Last November (November 2, 2012), Facebook held its first-ever Chicago Regional Hackathon, hosted at UIUC. The week before, a group of us from UIUC (David, Jay, Xander, and myself; all freshmen in CS) decided that we were going to participate and hopefully build something! Now, two months after the Hackathon, as we look back through the code we wrote, we notice a lot of interesting patterns. But first, let’s start at the beginning…

Forty-eight hours before the Hackathon was set to begin, we realized we still had no idea what we were going to build. We met briefly as a team to brainstorm and agreed that each of us would come up with ideas over the next two days, and that we would pick one at the Hackathon itself. Twenty-four hours before the Hackathon, we still hadn’t come up with anything, and I was starting to worry that we would have nothing by the time we were supposed to start.

But luck was on our side: with about 5 hours to go before the official start time, Jay came up with something awesome. While walking around the Siebel Center for Computer Science, he had seen this poster for a Microsoft Tech Talk about the Surface tablet:

After seeing the poster, he wanted a way to remember it without manually typing all of the information into his Google Calendar. And suddenly we had an awesome Hackathon project: an Android app that lets you take a picture of an event flyer and enters the details into the phone’s default calendar. After a little thought about how we would run the picture through OCR software, we began setting up our dev environments.

Three of us had to set up Eclipse 4.2 (Juno) with GitHub access and the Android SDK. The Android SDK download alone takes close to an hour per computer (we were targeting Android API level 16 with a minimum API level of 8). Unlike the rest of us, Xander prefers to develop in his Arch Linux environment, which for some reason couldn’t install the ADT plugin for Eclipse. That left him in his preferred editor, Emacs, anyway. With just an hour to go before the Hackathon, we had everything set up to build our Android app and began moving our gear over to the Siebel Center.

One of the smartest things we did was bring all of the gear we thought we would need: our own power strip, Ethernet cables, and a 5-port network switch. As a result, we had a rock-solid internet connection (needed to keep pulling from and pushing to GitHub) while other groups were struggling with the Wi-Fi (500 simultaneous users will tax any Wi-Fi setup). As I was working on my Lenovo X120e (11.6″ screen, 3 lbs), I also brought along my 20″ external monitor and my Logitech mouse and keyboard combo. This too was an excellent decision, as I was able to work comfortably with two screens (code editor on the 20″, documentation and debugging on the laptop display) for the entire event.

With everything ready to go, we watched as the Facebook team went over their intro, picked up a whole bunch of snacks, and then we were coding! Having decided to use the Tesseract OCR Library (specifically this wrapper for Android), Xander and I got to work understanding how to implement it while Jay and David worked through the Android tutorials to build a simple “Hello World” app and build the custom views we would need from there.

By the time Facebook had dinner rolling, we had managed to get the Tesseract Android Tools project compiled (as an NDK project – it required some special compiling), and communicating with a basic, one button app. For the next few hours after dinner, we worked to write the necessary code to take a picture on Android (using the camera API), import a picture (using the gallery API) and then send it to Tesseract for processing.

As we were coding away, Facebook staff were raffling off all sorts of nice gear in the IRC chat. I ended up winning a Facebook t-shirt (in addition to the standard Facebook Hackathon t-shirt)!

After the midnight sandwiches, Xander and David took a nap while Jay and I wrote out the functions that would add information to the calendar. We decided to try to simplify the OCR’ing that Tesseract had to do by providing it with a “box” at a time of data to process. This made sense for our application as we could just have the user draw a box around the fields that were needed in the calendar. We wrote out all of the code to draw the boxes and then scale that up to the actual picture but were not able to get Tesseract to correctly process the contents of a box. In fact, in all of our attempts, Tesseract threw some sort of exception, killing the entire app and making a mess of things.
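The scaling step is easy to get subtly wrong, so here is a rough sketch of it in plain Python rather than the Android code we wrote that night. The function name `scale_box` and the tuple layouts are made up for illustration, and it assumes the preview shows the whole picture stretched to fill the view (no letterboxing or cropping). We never found the cause of our crash, so this is not a diagnosis; the clamping only guarantees that the box handed to the OCR step lies inside the picture.

```python
def scale_box(box, view_size, image_size):
    """Map a box drawn on the on-screen preview to full-image pixel coordinates.

    box        -- (left, top, right, bottom) in preview (view) pixels
    view_size  -- (width, height) of the preview the user drew on
    image_size -- (width, height) of the full-resolution picture

    Assumes the picture is stretched to fill the preview exactly.
    """
    left, top, right, bottom = box
    view_w, view_h = view_size
    img_w, img_h = image_size

    # Scale factors from preview pixels to image pixels.
    sx = img_w / view_w
    sy = img_h / view_h

    # The user may drag in any direction, so order the corners first.
    x0, x1 = sorted((left, right))
    y0, y1 = sorted((top, bottom))

    # Scale, then clamp each edge to the image bounds.
    x0 = max(0, min(img_w, int(x0 * sx)))
    x1 = max(0, min(img_w, int(x1 * sx)))
    y0 = max(0, min(img_h, int(y0 * sy)))
    y1 = max(0, min(img_h, int(y1 * sy)))

    # Refuse boxes that end up with no area after clamping.
    if x1 <= x0 or y1 <= y0:
        raise ValueError("box is empty after scaling and clamping")

    return (x0, y0, x1, y1)
```

For example, a box drawn on a 480×800 preview of a 960×1600 picture has every coordinate doubled, and a box dragged past the edge of the preview is cut off at the edge of the picture instead of pointing outside it.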

Around breakfast time (8 AM, five hours before submissions were due), we decided to pull the plug on the project. We were at a point where Tesseract gave us inconsistent output when we provided it with an entire image and crashed when we tried to have it process only selected parts of the image. There was no way we were going to get better results in the time remaining, and we were all exhausted after a very long night. As a team, we decided that we were done, dragged our gear back to the dorms, and slept.

We learned that Tesseract is rather temperamental software. Providing it with the exact same picture (through the gallery import) returned different results each time we tried it. Testing the same image on different hardware (Google Nexus 7, Sony Xperia Ion, Motorola Atrix II, Google Nexus One) produced different results as well. To date, we are utterly perplexed at how Tesseract can possibly be functional, considering how much difficulty we had with its results and how inconsistent they were. To be honest, we’re not sure whether the problem is Tesseract or the wrapper written to use it in Android applications. More than likely, it is a simple exception that needs to be caught and dealt with instead of thrown. It is also quite possible that we missed an optional argument that would have added some stability.

Looking back, we took on a very ambitious project for a hackathon, in an area with which none of us had any familiarity. This was a mistake. We lost a lot of time trying to understand the basic Android workflow (even though only half the team was working on it). We were in over our heads with Tesseract: we just didn’t have the familiarity with the API to build something useful.

I can tell you that we’re not finished yet! We’re cautiously optimistic that, given enough time, we can learn the nuances of Tesseract well enough to get proper output. We have a few other image manipulation tricks we still want to try, such as converting the image to grayscale before passing it to Tesseract. Sooner or later we will get back to this project, and eventually it will be finished.
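For the curious, the grayscale idea boils down to replacing each pixel’s red, green, and blue values with one brightness value. Below is a minimal sketch in plain Python, not Android code and not something we have tried against Tesseract yet. The function name `to_grayscale` and the rows-of-tuples image layout are our own illustrative choices, and the weights are the common ITU-R BT.601 luma coefficients, which is only one of several ways to do the conversion.

```python
def to_grayscale(pixels):
    """Convert rows of (r, g, b) tuples (0-255 each) to rows of gray levels (0-255).

    Uses the ITU-R BT.601 luma weights: green contributes most to perceived
    brightness, blue the least.
    """
    gray_rows = []
    for row in pixels:
        gray_row = []
        for r, g, b in row:
            level = round(0.299 * r + 0.587 * g + 0.114 * b)
            # Guard against rounding pushing the value out of range.
            gray_row.append(max(0, min(255, level)))
        gray_rows.append(gray_row)
    return gray_rows
```

Whether this actually improves the OCR output for our flyers is something we still have to test.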

Overall, I thought the Hackathon was a very worthwhile experience. I had a lot of fun working under pressure, trying to bring this whole project together. Even though the end result is technically a failure, I don’t see it that way. It is a great stepping stone in our journey as software developers and a learning experience in rapid group projects. We did some parts well (pulling a team together and setting up all of the collaboration tools) and others not so well (picking a doable project), but in the end we learned from it, and that’s what really matters. At our next hackathon (we’re going to MHacks), at least we won’t make the same project definition mistakes.